Chaos Engineering is a hot topic right now and has never been more relevant within the community. While several factors have contributed to this over the better part of a decade (the evolution of DevOps, SRE practices, the testing-in-production revolution), the single biggest reason this discipline is getting so much attention is the emergence of the Cloud-Native paradigm. Microservices, containerization, Kubernetes and the highly vibrant ecosystem around them (both tools and people) have profoundly influenced how applications are built, deployed and maintained, and chaos engineering is an important part of this structure.

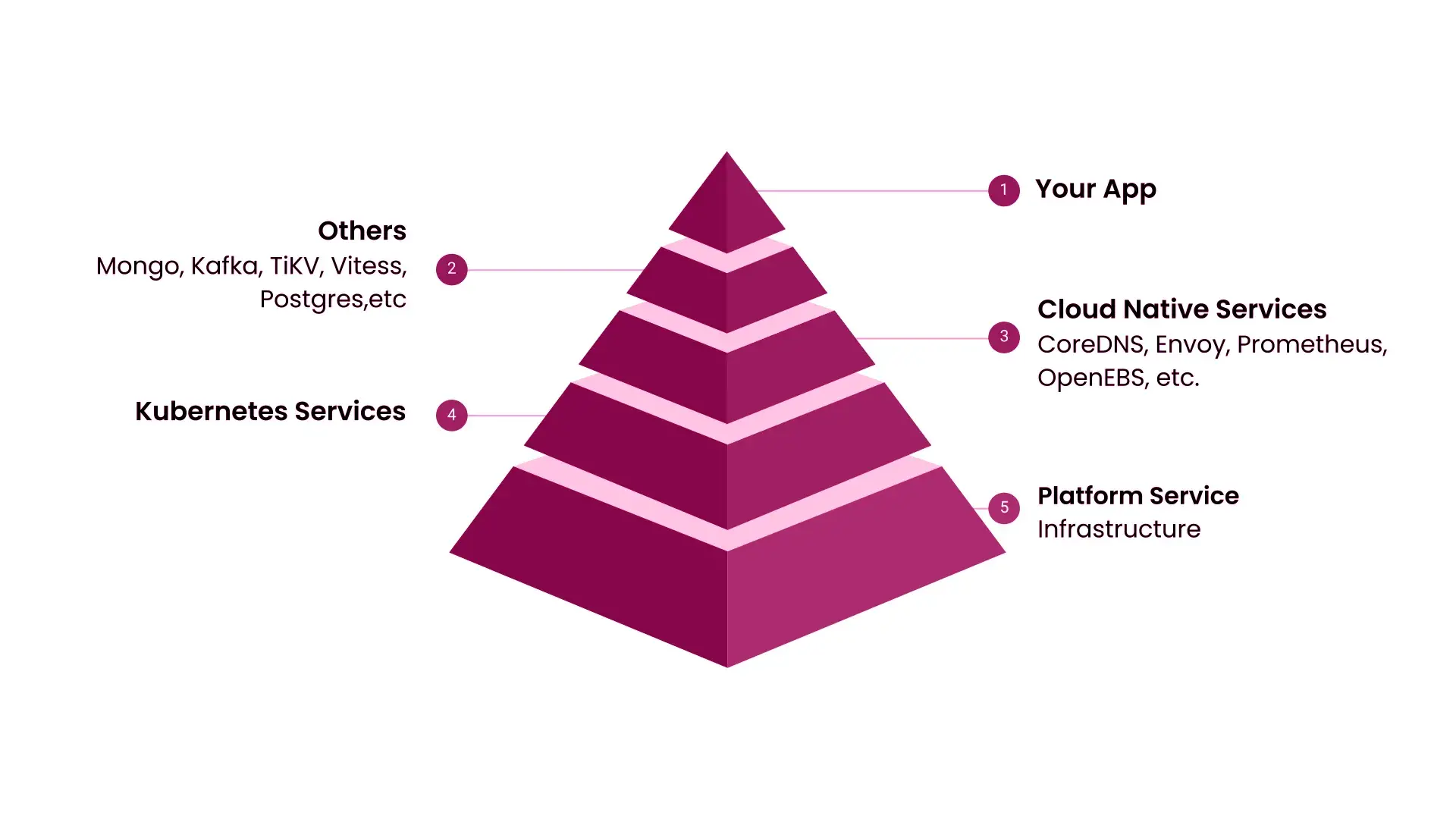

At the outset, it is easy to see why. Simply put, the resilience of business applications or services today depends not only on how well they are built, but also on how the deployment environment (composed of several interdependent microservices) behaves. That environment spans everything from the underlying platform and Kubernetes services, through ecosystem services (such as service discovery, storage and observability) and direct application dependencies (databases, message queues/data pipelines), up to the user-facing services. This is not to say that legacy environments or monolithic software don’t need chaos engineering, but the current model offers more “chaos surface area”, i.e., more potential points of failure.

Add to this the efforts enterprises are making as part of their digital transformation initiatives (re-architecting applications, migrating to Kubernetes and setting up complex multi/hybrid cloud strategies to avoid vendor lock-in), and you have enough variables at play to make DevOps teams uneasy. Chaos engineering works as an apprehension slayer: by simulating outages, it increases confidence in the software delivery model and gives engineering teams precious hours to cover all bases.

Often, building sub-categories of existing practices/models is an exercise fraught with comparisons, declarations of opportunism and scrutiny. However, in some cases the sub-category is acknowledged, and then solidified by wide acceptance. At ChaosNative, we recognized the need for a cloud-native perspective and approach (in design, implementation and usage) to chaos engineering in a Kubernetes-driven world.

Our single initial motivation was to give Cloud Native engineers of all kinds (app developers, DevOps engineers, test automation engineers, SRE/ops engineers) the same user experience for chaos experimentation and resilience evaluation that they had for everything else on Kubernetes (application lifecycle management, storage definitions, security policies, etc.): declarative constructs, placed in YAML, that define chaos intent and leverage the Kubernetes API itself. This spawned multiple implicit requirements: the chaos business logic needed to be containerized, the chaos definition needed to be a Kubernetes (custom) resource with appropriate reconciliation logic (a controller), the chaos experiments needed to come under the purview of Kubernetes RBAC and, finally, the chaos artifacts (for various faults) needed to be presented and made consumable in a simple, centralized manner. The result was the open source project LitmusChaos (you can track its journey here).
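
To make the “declarative intent plus reconciliation” idea concrete, here is a minimal Python sketch of a single controller pass over a chaos resource. Everything in it is hypothetical and deliberately simplified: `ChaosResource`, its `engine_state` field, `reconcile()` and the dictionary standing in for RBAC are illustrative names, not the LitmusChaos schema or controller code. The point is the pattern: the user declares the desired state, and a controller repeatedly compares it with the observed state and acts only on the difference.

```python
from dataclasses import dataclass, field


@dataclass
class ChaosResource:
    """Hypothetical, simplified chaos custom resource (not the Litmus schema)."""
    name: str
    namespace: str
    fault: str
    engine_state: str = "active"  # desired state declared by the user: "active" or "stop"
    status: dict = field(default_factory=dict)


def authorized(resource: ChaosResource, rbac: dict) -> bool:
    """Stand-in for a Kubernetes RBAC check: is this fault allowed in this namespace?"""
    return resource.fault in rbac.get(resource.namespace, set())


def reconcile(resource: ChaosResource, running: set, rbac: dict) -> str:
    """One level-triggered pass: drive the observed state toward the declared spec."""
    key = (resource.namespace, resource.name)
    if resource.engine_state == "active" and key not in running:
        if not authorized(resource, rbac):
            resource.status["phase"] = "Forbidden"
            return "forbidden"
        running.add(key)  # a real controller would launch the chaos container here
        resource.status["phase"] = "Running"
        return "started"
    if resource.engine_state == "stop" and key in running:
        running.discard(key)  # ...and revert/clean up the fault here
        resource.status["phase"] = "Stopped"
        return "stopped"
    return "noop"  # observed state already matches the desired state


if __name__ == "__main__":
    rbac = {"shop": {"pod-delete"}}
    running = set()
    exp = ChaosResource(name="cart-pod-delete", namespace="shop", fault="pod-delete")
    print(reconcile(exp, running, rbac))  # started
    print(reconcile(exp, running, rbac))  # noop
    exp.engine_state = "stop"
    print(reconcile(exp, running, rbac))  # stopped
```

Because the loop is level-triggered, re-running it is harmless: a second pass with an unchanged spec is a no-op, which is what lets chaos be managed like any other Kubernetes object.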

Along the way, we came to associate more than just the above characteristics with cloud native chaos engineering, in order to make it truly effective for all stakeholders. These characteristics are faithful in spirit to, and modeled on, the original principles of chaos; they simply lend those principles a cloud-native context.

However, whereas the aforementioned original principles talk only about the practice of chaos per se, what we put together can also be applied to describe the toolsets and platforms that implement cloud native chaos engineering.

The sheer diversity of workloads (both applications and add-on/infra services) one encounters in Kubernetes is mind-boggling. At the same time, a host of failures are possible in platform services (cloud, on-premise). The use cases are ever evolving, so it would be difficult for any single platform to claim completeness in terms of fault injection and other auxiliary capabilities. Open source is the way forward here. Needless to say, it is important for platforms to provide a simple interface for the community to exercise the available faults and to contribute new ones for the world to use (something that Litmus facilitates via the ChaosHub). This helps build up a comprehensive database of “Real World Events”.

The ease and simplicity of adding use cases also leads us to the next principle.

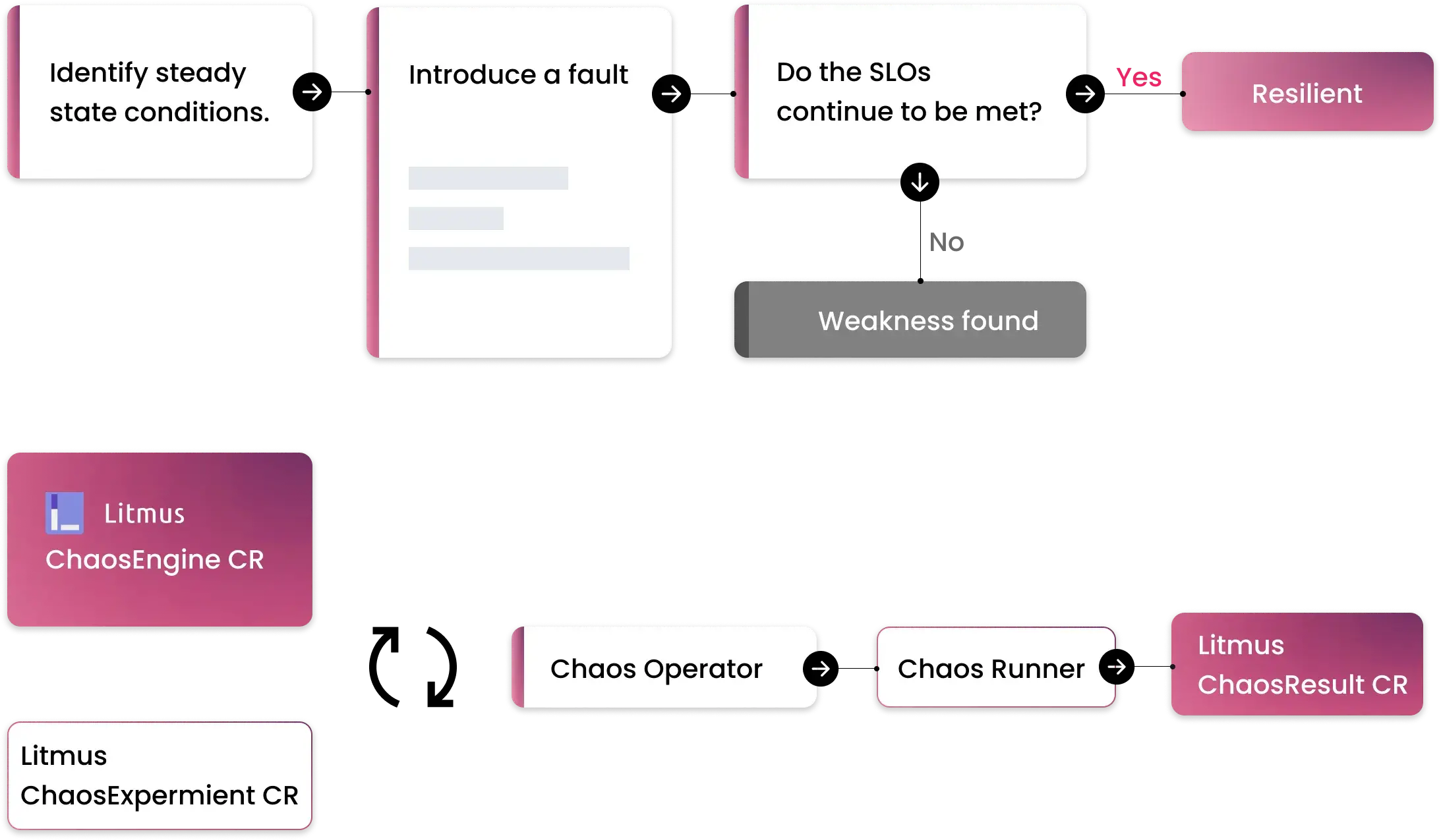

In the previous principle, we spoke about encouraging the community to contribute more faults, real-world failures and use cases. To achieve this, it is necessary to standardize the operating characteristics, or lifecycle, of an experiment and to publish the APIs for it, while still retaining the chaos business logic brought forward by the community. This makes building, running and recording the results of chaos experiments consistent, which in turn allows DevOps teams to build tooling/scripts and integrate them seamlessly into their CD pipelines. Litmus enables this by using Kubernetes CRDs, which are themselves an API standard for chaos definitions, and by supporting a BYOC (bring-your-own-chaos) model wherein folks can wrap their chaos business logic in a container image specified within a custom resource. The Litmus control plane can then orchestrate and manage the experiment regardless of the nature of the fault. These definitions specify the target application/service or infra component, which brings the benefits of “minimizing the blast radius” and controlled failure injection.
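
A fault-agnostic lifecycle can be sketched as follows, assuming a hypothetical `Fault` interface that community-contributed logic implements (in Litmus the contributed logic ships as a container image; a Python class stands in for it here). The orchestrator in `run_experiment()` never looks inside the fault: it limits the blast radius with a label selector and a target cap, injects, always reverts whatever it injected, and records a uniform result. None of these names come from the Litmus API.

```python
from abc import ABC, abstractmethod


class Fault(ABC):
    """Hypothetical interface for community-contributed chaos business logic."""

    @abstractmethod
    def inject(self, target: str) -> None: ...

    @abstractmethod
    def revert(self, target: str) -> None: ...


def matches(labels: dict, selector: dict) -> bool:
    """True if every key/value in the selector is present in the target's labels."""
    return all(labels.get(k) == v for k, v in selector.items())


def run_experiment(fault: Fault, targets: list, selector: dict, max_targets: int) -> dict:
    """Standard lifecycle, identical for every fault: select, inject, revert, record."""
    # Blast radius: only targets matching the selector, capped at max_targets.
    selected = [t["name"] for t in targets if matches(t["labels"], selector)][:max_targets]
    injected, phase, error = [], "Completed", None
    try:
        for name in selected:
            fault.inject(name)
            injected.append(name)
    except Exception as exc:  # fault-specific failures are recorded, not propagated
        phase, error = "Error", str(exc)
    finally:
        for name in reversed(injected):  # revert exactly what was injected, newest first
            fault.revert(name)
    return {"targets": selected, "injected": injected, "phase": phase, "error": error}
```

A contributor would only subclass `Fault`; selection, cleanup and result recording stay the same for every experiment, which is what makes the results consistent enough to script against in a CD pipeline.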

It is important for chaos experiments to be backed by a solid “hypothesis around steady state”, which is often mapped directly to the SLOs set for the application services. This hypothesis can be quite diverse, and might encompass observing and measuring anything from application metrics and infra component states to the availability of downstream services and resource states (especially on Kubernetes). Like the chaos intent, the steady-state hypothesis needs to be declarative too, particularly because, when experiments are run in an “automated” fashion, these behaviours need to be validated over the course of the experiment's execution. At the same time, experiments also need to generate useful information (logs, events and metrics) that can be correlated with app or infra behaviour. Once again, the interface for defining these observability parameters needs to be standardized, enabling the community to plug in diverse sources of data. Litmus enables this via Probes and Chaos Metrics, which can be used to create chaos-interleaved dashboards.
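
A declarative steady-state hypothesis can be evaluated mechanically. The sketch below assumes a hypothetical probe schema (`metric`, `comparator`, `threshold`, and the experiment `phases` in which the check must hold) and made-up metric samples; it is not the Litmus Probe specification, only an illustration of validating an SLO-style hypothesis before, during and after chaos.

```python
import operator

# Comparators a declarative probe may reference (hypothetical schema).
COMPARATORS = {">=": operator.ge, "<=": operator.le, ">": operator.gt,
               "<": operator.lt, "==": operator.eq}


def evaluate_probes(probes: list, samples: dict) -> dict:
    """Check each probe's metric against its threshold in every phase it covers.

    `samples` maps a phase ("pre", "during", "post") to observed metric values.
    """
    results = {}
    for probe in probes:
        compare = COMPARATORS[probe["comparator"]]
        results[probe["name"]] = all(
            compare(samples[phase][probe["metric"]], probe["threshold"])
            for phase in probe["phases"]
        )
    return results


probes = [
    # Availability SLO: must hold before, during and after the fault.
    {"name": "availability", "metric": "success_rate", "comparator": ">=",
     "threshold": 0.99, "phases": ["pre", "during", "post"]},
    # Latency only has to recover once the chaos ends.
    {"name": "latency-recovers", "metric": "p99_ms", "comparator": "<=",
     "threshold": 300, "phases": ["post"]},
]
# Illustrative samples, not real measurements.
samples = {
    "pre": {"success_rate": 0.999, "p99_ms": 120},
    "during": {"success_rate": 0.97, "p99_ms": 450},
    "post": {"success_rate": 0.998, "p99_ms": 140},
}
results = evaluate_probes(probes, samples)
print(results)  # {'availability': False, 'latency-recovers': True}
print("verdict:", "Pass" if all(results.values()) else "Fail")  # verdict: Fail
```

Declaring *when* a probe must hold is the design choice that matters here: an availability SLO may need to hold throughout, whereas latency might only be required to recover after the fault is reverted.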

GitOps: One of the immediate impacts of the cloud native culture is the practice of integrating chaos into continuous delivery, driven mainly by the need to test resilience in prod-like staging environments before promoting changes to production. Because chaos experiments are defined declaratively, they lend themselves to being stored in Git and referenced/executed upon the specific triggers that characterize the CD flow. Chaos can be injected via dedicated “stages” in a pipeline or via “automated” updates to the cluster using GitOps controllers. Litmus facilitates this through integrations with CD frameworks such as Keptn and ArgoCD, via relevant plugins and control plane services.
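
The CD integration can be sketched as a pipeline “chaos stage” that reads experiment definitions from a Git checkout, runs those bound to the current trigger, and gates promotion on the results. The file layout, the `triggers` field and the `run` callback (which would submit an experiment to the chaos platform and return its verdict) are all hypothetical; JSON is used only to keep the sketch within the Python standard library.

```python
import json
from pathlib import Path
from typing import Callable


def load_experiments(repo_dir: str) -> list:
    """Read declarative experiment definitions from a checked-out Git directory.

    JSON keeps this sketch stdlib-only; real manifests would typically be YAML.
    """
    return [json.loads(p.read_text()) for p in sorted(Path(repo_dir).glob("*.json"))]


def chaos_stage(experiments: list, trigger: str, run: Callable[[dict], bool]) -> tuple:
    """A CD 'chaos stage': run the experiments bound to this trigger, gate promotion."""
    selected = [e for e in experiments if trigger in e.get("triggers", [])]
    verdicts = {e["name"]: run(e) for e in selected}
    # Promote only if every selected experiment passed (vacuously true if none matched).
    return all(verdicts.values()), verdicts
```

In a pipeline script, one might call `chaos_stage(load_experiments("chaos/"), "staging-deploy", run)` and exit non-zero when the first element of the result is `False`, so that the CD tool halts promotion; the `chaos/` directory and `staging-deploy` trigger are illustrative.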

That the aforementioned principles are well acknowledged is evident from the various chaos tools and projects that have emerged in the recent past. While many have adopted individual tenets, Litmus is well poised to grow into a comprehensive platform that encompasses all of the points described.

ChaosNative is committed to advocating and enriching the principles of Cloud Native Chaos Engineering through its sponsorship of the Litmus project and by participating in and driving discussions at various open forums in this space.